What Is the Core Essence of AI Technology?

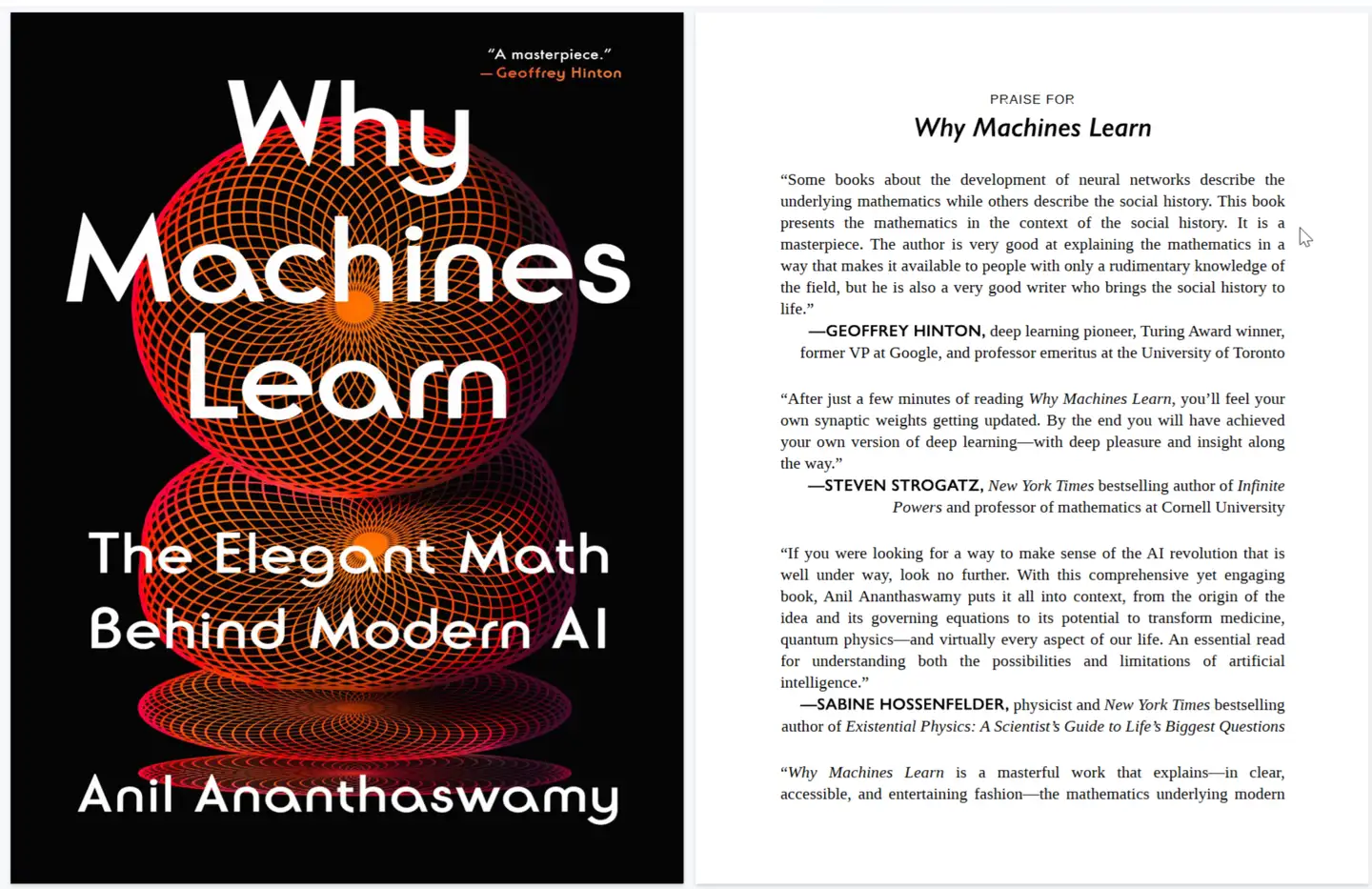

Why Machines Learn: The Elegant Math Behind Modern AI, published in Chinese as 《人工智能背后的数学原理》, is a popular science book devoted to the principles behind AI technology. It is not a manual of AI algorithms or of the code needed to implement them; it is a narrative work, which makes it well suited to readers outside the AI industry who are curious about AI.

The author, Anil Ananthaswamy, is a professional science communicator skilled at presenting complex scientific ideas in accessible language. Even so, the book explains the mathematics behind machine learning in some detail, so readers will get more from it if they have a basic grounding in linear algebra, probability, and related mathematics.

The book explains how AI can generate content on its own in terms of three mathematical pillars:

- Linear Algebra: Representing massive datasets as vectors and defining the model’s representational space.

- Calculus: Optimizing the model, that is, adjusting its parameters step by step to reduce its error on the training data.

- Probability: Enabling the model to make decisions (i.e., generate output).
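How the three pillars fit together can be sketched with a toy classifier. This is only an illustrative sketch, not an example taken from the book: the data, the function names (`dot`, `softmax`, `predict`, `train`), and the learning rate are all assumptions chosen for brevity. Inputs are vectors scored with dot products (linear algebra), the weights are adjusted by following the gradient of a cross-entropy loss (calculus), and the output is drawn from a probability distribution (probability).

```python
import math
import random

def dot(u, v):
    """Linear algebra: the inner product of two vectors."""
    return sum(a * b for a, b in zip(u, v))

def softmax(scores):
    """Probability: turn raw scores into a distribution that sums to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def predict(weights, x):
    """Score each class with a dot product, then normalize the scores."""
    return softmax([dot(w, x) for w in weights])

def train(data, n_classes, n_features, lr=0.5, epochs=200):
    """Calculus: follow the gradient of the cross-entropy loss downhill."""
    weights = [[0.0] * n_features for _ in range(n_classes)]
    for _ in range(epochs):
        for x, label in data:
            probs = predict(weights, x)
            for k in range(n_classes):
                # d(loss)/d(weights[k]) = (probs[k] - target_k) * x
                error = probs[k] - (1.0 if k == label else 0.0)
                for j in range(n_features):
                    weights[k][j] -= lr * error * x[j]
    return weights

# Hypothetical toy data: two 2-dimensional inputs, one per class.
data = [([1.0, 0.0], 0), ([0.0, 1.0], 1)]
weights = train(data, n_classes=2, n_features=2)

probs = predict(weights, [1.0, 0.0])
rng = random.Random(0)  # fixed seed so the sketch is repeatable
sampled_class = rng.choices(range(len(probs)), weights=probs)[0]
print(probs, sampled_class)
```

Real models differ mainly in scale: far larger vectors, far more parameters, and many stacked layers, but the same three ingredients.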

To put it more succinctly, training on known data determines a parameterized function of enormous scale (billions or even trillions of parameters) within the representational space. This function is called the model, and it is then made available for users to interact with. A user's query is the function's input; the AI's response is the function's output. Of course, this function is unlike the functions of ordinary mathematics: it is built from many stacked layers operating in a high-dimensional space, and humans can adjust it only indirectly, through further training or fine-tuning, rather than editing it at will as they would an ordinary formula.
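The idea of a model as a layered, parameterized function can be made concrete with a deliberately tiny sketch. Everything here is hypothetical: the parameter values in `PARAMS` are made up rather than trained, and the names `layer`, `model`, and `count_parameters` are illustrative. The point is only that, once the parameters are frozen, a query goes in and a response comes out, exactly as with any other function.

```python
import math

def layer(weights, biases, inputs):
    """One layer: a matrix-vector product plus a bias, then a tanh nonlinearity."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

# Hypothetical frozen parameters (made up here, not the result of training).
PARAMS = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1]),  # 2 inputs -> 3 units
    ([[0.7, -0.5, 0.2]], [0.05]),                                 # 3 units -> 1 output
]

def model(query):
    """The model is the composition of its layers: input in, output out."""
    activations = query
    for weights, biases in PARAMS:
        activations = layer(weights, biases, activations)
    return activations

def count_parameters(params):
    """Count every individual weight and bias across all layers."""
    total = 0
    for weights, biases in params:
        total += sum(len(row) for row in weights) + len(biases)
    return total

response = model([1.0, 2.0])
n_params = count_parameters(PARAMS)
print(response, n_params)
```

This toy has 13 parameters, few enough to edit by hand. At billions of parameters no single number has an obvious meaning, which is why changes are made through training rather than direct editing.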

Why say that training “determines” a function rather than “generates” one? Does this imply that the function already exists and is simply discovered by humans? The fitting process in machine learning does resemble a search for an optimal function: once the data, the family of candidate functions, and the measure of error are fixed, the best-fitting function is in a sense already there, waiting to be found.
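A simple case where this "already there" intuition holds exactly is fitting a straight line by least squares. The sketch below is an assumption-laden illustration, not the book's own example: the data points and the function names are made up. It computes the best line directly from the data with the closed-form formula, then shows that an iterative gradient-descent search arrives at the same line. For large neural networks the picture is looser, since the error surface has many good solutions and training finds one of them rather than a unique optimum.

```python
def closed_form_fit(xs, ys):
    """Least-squares line computed directly from the data."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def gradient_descent_fit(xs, ys, lr=0.05, steps=5000):
    """Search for the same line by repeatedly stepping down the error gradient."""
    slope, intercept = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        errors = [slope * x + intercept - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to each parameter.
        grad_slope = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_intercept = 2.0 / n * sum(errors)
        slope -= lr * grad_slope
        intercept -= lr * grad_intercept
    return slope, intercept

# Hypothetical data lying roughly along a straight line.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.1]

exact = closed_form_fit(xs, ys)
searched = gradient_descent_fit(xs, ys)
print(exact, searched)
```

Both routes land on the same slope and intercept, because the data and the error measure fix the answer before any search begins.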

The book also briefly introduces the Transformer architecture, the prevailing general framework behind today's mainstream models. It acknowledges, however, that Transformers have many drawbacks, and architectures with lower computational complexity are expected to emerge in the future.